Choosing between edge computing and cloud computing can feel overwhelming when every platform claims to be faster, smarter, and more scalable than the rest. If you’re here, you’re likely trying to understand which approach best fits your infrastructure, performance, security, or machine learning needs, and how that decision will shape your digital strategy.

This article breaks down the core differences, real-world use cases, cost considerations, and performance trade-offs between edge and cloud environments. We’ll explore how latency, data processing demands, compliance requirements, and AI workloads influence the right choice for modern organizations.

Our insights are grounded in current industry research, hands-on testing across distributed systems, and analysis of evolving machine learning frameworks and device-level architectures. By the end, you’ll have a clear, practical understanding of when to rely on centralized cloud power, when to deploy edge solutions, and how to design a hybrid model that maximizes efficiency and scalability.

Centralized vs. Decentralized: The Core of Modern Data Processing

In today’s infrastructure debates, edge computing vs cloud computing isn’t about superiority—it’s about fit. Cloud computing centralizes workloads in hyperscale data centers (think AWS us-east-1 or Frankfurt regions), offering elastic storage and machine learning pipelines. Edge computing processes data near its source—like factory-floor IoT sensors in Detroit or 5G towers in Seoul—reducing latency and bandwidth strain.

Some argue that cloud alone is cheaper. That is often true at scale. But latency-sensitive applications, such as autonomous vehicles or real-time fraud detection, cannot absorb the extra milliseconds that a round trip to a distant data center adds.

| Use Case | Best Fit |
|---|---|
| Batch analytics | Cloud |
| Real-time robotics | Edge |
| Hybrid SaaS apps | Both |

Choose based on workload, compliance, and response time.

Understanding Cloud Computing: The Power of Centralized Resources

At its core, cloud computing is the delivery of computing services—like storage, processing power, and software—over the internet from centralized data centers. Think of it like a power plant: instead of every home generating its own electricity, everyone taps into a shared grid (no noisy generators required).

Scalability & Elasticity mean businesses can instantly expand or shrink resources based on demand. For example, Netflix scales servers during major releases to handle traffic spikes. According to Gartner, global public cloud spending surpassed $590 billion in 2023, largely due to this flexibility.
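To make elasticity concrete, here is a minimal sketch of a proportional scaling rule, similar in spirit to the formula documented for the Kubernetes Horizontal Pod Autoscaler. It is not how Netflix or any particular provider scales. The function name, the 60% target utilization, and the instance limits are assumptions chosen for illustration.

```python
import math


def desired_instances(
    current: int,
    observed_util_pct: float,
    target_util_pct: float = 60.0,  # assumed target, not a provider default
    min_instances: int = 1,
    max_instances: int = 100,
) -> int:
    """Size the fleet so average utilization moves back toward the target."""
    if current < 1 or target_util_pct <= 0:
        raise ValueError("current must be >= 1 and target_util_pct must be > 0")
    # Scale the fleet in proportion to how far utilization is from the target.
    raw = math.ceil(current * observed_util_pct / target_util_pct)
    # Clamp so a noisy metric cannot scale the fleet to zero or without bound.
    return max(min_instances, min(max_instances, raw))


print(desired_instances(10, 90))  # traffic spike: scale out to 15
print(desired_instances(10, 30))  # quiet period: scale in to 5
```

The clamp is what keeps the "shrink" half of elasticity safe: the fleet contracts when demand drops, but never below a floor you choose.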

Cost-Effectiveness comes from pay-as-you-go pricing. Companies avoid massive upfront hardware investments and only pay for what they use—similar to a utility bill.

Centralized Management simplifies updates, backups, and cybersecurity from a single dashboard.

- Pro tip: Regularly audit cloud usage to prevent paying for idle resources.

In debates around edge computing vs cloud computing, cloud remains dominant for large-scale data processing due to its centralized efficiency and proven reliability.

Exploring Edge Computing: Processing Power Where It’s Needed

I first saw the power of edge computing during a factory demo where a robotic arm froze because cloud latency spiked (not exactly confidence‑inspiring when machinery is involved). That moment made the concept real.

Edge computing is a distributed computing paradigm that brings computation and data storage closer to where data is generated. Instead of sending everything to distant servers, devices process information locally.

Key advantages include:

- Reduced Latency: Real-time systems like autonomous vehicles or smart cameras can’t wait milliseconds for a round trip to the cloud.

- Bandwidth Conservation: Only essential insights travel upstream, cutting network strain.

- Enhanced Privacy & Security: Sensitive health or financial data stays on-site.

In debates around edge computing vs cloud computing, critics argue cloud systems are simpler and cheaper to manage. That’s sometimes true. But when speed, reliability, or privacy truly matter, pushing compute power closer to the source isn’t just efficient—it’s necessary.

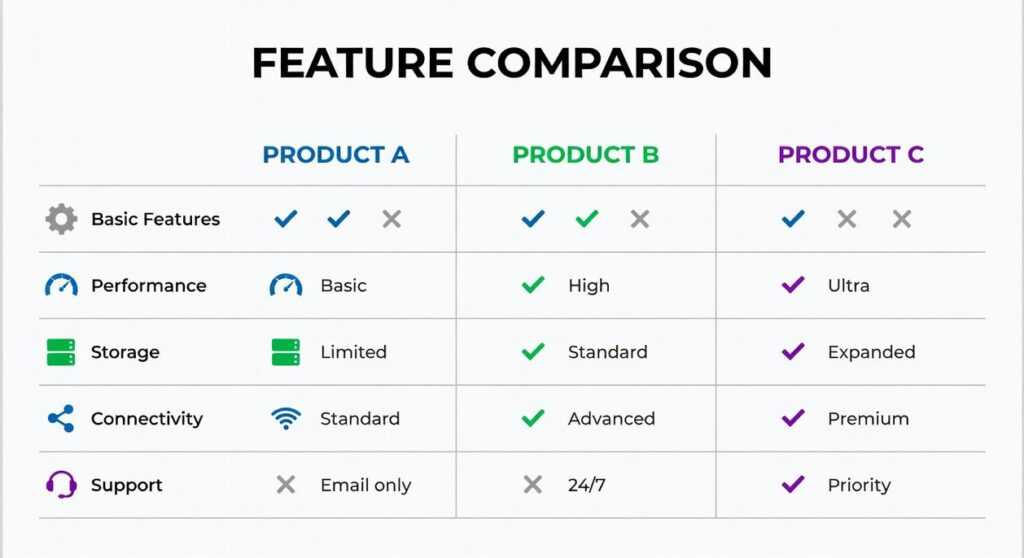

Head-to-Head Comparison: A Feature-by-Feature Breakdown

When choosing between cloud and edge setups, what actually matters to you—speed, cost, control? Let’s break it down so you can decide with confidence.

| Feature | Cloud | Edge |
|---|---|---|
| Latency | Higher | Ultra-Low |
| Bandwidth Usage | High | Low |
| Scalability | Massive, Centralized | Distributed, Incremental |
| Cost Model | OpEx | CapEx + OpEx |
| Data Security | Centralized teams | Local processing, device-level risk |

Latency: Cloud systems rely on distant data centers, which adds delay. That lag (even milliseconds) can disrupt real-time feedback in autonomous vehicles or AR gaming. Edge processes data near the source, delivering ultra-low latency. Ever noticed how a slight delay ruins a video call? Now imagine that delay in robotic surgery.
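A rough way to see why distance matters is to compute the floor that physics sets on a round trip. The sketch below assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light) and ignores routing, queuing, and server time, so real latency will be higher. The function names and the example distances and budgets are hypothetical.

```python
FIBER_SPEED_KM_PER_S = 200_000  # approximate signal speed in optical fiber


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S


def fits_budget(distance_km: float, processing_ms: float, budget_ms: float) -> bool:
    """True if propagation plus processing stays within the latency budget."""
    return min_round_trip_ms(distance_km) + processing_ms <= budget_ms


# Hypothetical control loop with a 10 ms budget and 5 ms of processing.
print(min_round_trip_ms(1500))    # data center 1,500 km away: 15.0 ms before any work
print(fits_budget(1500, 5, 10))   # False: the distant region cannot meet the budget
print(fits_budget(1, 5, 10))      # True: an on-site edge node can
```

No amount of server capacity in the distant region changes the first number, which is why latency-critical loops tend to move toward the data source.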

Bandwidth Usage: Cloud requires constant data transfer, increasing bandwidth costs. Edge filters and processes locally, sending only essential data. Less traffic, lower bills.
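One simple form of local filtering is a deadband: the edge device forwards a reading only when it differs from the last transmitted value by at least some threshold. The sketch below is illustrative only. The function name, the threshold, and the sample temperatures are assumptions, and production systems often combine this with periodic heartbeats or aggregation.

```python
from typing import Iterable, List


def deadband_filter(readings: Iterable[float], threshold: float) -> List[float]:
    """Return only the readings that would be sent upstream."""
    sent: List[float] = []
    for value in readings:
        # Always send the first reading, then only meaningful changes.
        if not sent or abs(value - sent[-1]) >= threshold:
            sent.append(value)
    return sent


temperatures = [20.0, 20.1, 20.2, 21.5, 21.6, 19.0]
upstream = deadband_filter(temperatures, threshold=1.0)
print(upstream)                                   # [20.0, 21.5, 19.0]
print(f"{len(upstream)} of {len(temperatures)} readings sent")
```

In this toy run half the readings never leave the device, which is the bandwidth saving the comparison above describes.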

Scalability: Cloud offers massive centralized scaling—spin up servers instantly. Edge grows incrementally by adding devices. Which feels more practical for your setup?

Cost Model: Cloud runs on Operational Expense (OpEx), meaning recurring payments. Edge demands upfront hardware (Capital Expense, or CapEx) plus maintenance. Prefer predictable subscriptions or long-term infrastructure investment?
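The OpEx versus CapEx question can be framed as a break-even calculation: how many months of cloud fees equal the upfront edge hardware plus its running costs? The sketch below uses made-up figures and ignores depreciation, financing, and staffing, so treat it as a starting point rather than a pricing model. The function name and all amounts are hypothetical.

```python
import math
from typing import Optional


def break_even_month(
    edge_capex: float, edge_monthly: float, cloud_monthly: float
) -> Optional[int]:
    """First month at which cumulative edge cost is no higher than cloud cost.

    Returns None when edge never catches up (its monthly cost is not lower).
    """
    monthly_saving = cloud_monthly - edge_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(edge_capex / monthly_saving)


# Hypothetical: 24,000 upfront and 500/month at the edge vs 1,500/month in the cloud.
print(break_even_month(24_000, 500, 1_500))    # 24
print(break_even_month(24_000, 1_500, 1_500))  # None: no monthly saving to recover capex
```

If the expected lifetime of the hardware is shorter than the break-even month, the subscription model is likely the cheaper choice for that workload.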

Data Security: Cloud benefits from centralized security teams (a major advantage). Edge reduces transit risks but introduces physical device vulnerabilities.

Still unsure about edge computing vs cloud computing? Start with foundational clarity by reading https://llusyep.com/understanding-cloud-computing-architecture-in-simple-terms/.

Ultimately, your choice depends on priorities—speed, scale, or strategic control.

Practical Applications: Real-World Use Cases for Edge and Cloud

Cloud computing has become the backbone of modern digital infrastructure. According to Gartner, global public cloud spending is projected to surpass $600 billion in 2024, underscoring its dominance. Big Data Analytics and Machine Learning workloads thrive here because centralized data centers offer virtually unlimited compute for training complex models. For example, OpenAI and other labs rely on hyperscale clusters to train large language models.

Meanwhile, SaaS applications and web hosting depend on elastic scaling to handle traffic spikes during global events (think ticket launches or Black Friday). Data archiving and disaster recovery also benefit, as distributed backups reduce downtime; IBM reports that the average data breach cost reached $4.45 million in 2023, making resilient storage a financial imperative.

However, not every workload belongs in distant data centers. That is where edge computing shines. Autonomous vehicles and drones require millisecond-level decisions for navigation and object avoidance; latency measured in seconds would be catastrophic. Similarly, smart factories process industrial IoT sensor streams locally to enable predictive maintenance, cutting downtime by up to 30% according to McKinsey.

In retail, in-store analytics can analyze customer traffic patterns on local devices, preserving privacy while still generating actionable insights. The debate around edge computing vs cloud computing often frames them as rivals. Yet hybrid architectures often deliver the best outcomes, blending the real-time responsiveness of the edge with the scalable intelligence of the cloud. Pro tip: map latency, bandwidth, and compliance requirements before choosing an architecture. The right deployment model depends on context.

Choosing Your Architecture: A Decision-Making Framework

You now have the criteria to choose between edge computing and cloud computing for any project. I learned this the hard way when a retail app I helped deploy crashed during a flash sale (think Black Friday chaos).

Ask three questions: How critical is real-time processing? What are your bandwidth limits? Where must data reside for security or compliance?

Some argue the cloud alone scales. Others swear by the edge. Reality: the future is hybrid, with the edge handling instant decisions and the cloud managing analytics and storage.

Pro tip: map each workload’s latency, bandwidth, and security requirements before committing to an architecture.
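The three questions in this section can be sketched as a small rule-based helper. This is one possible encoding, not a definitive framework. The `Workload` fields, the `recommend` function, and the 50 ms default for a typical cloud round trip are all assumptions you would replace with your own measurements.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    latency_budget_ms: float     # maximum acceptable response time
    data_rate_mbps: float        # raw data the workload produces
    uplink_mbps: float           # bandwidth available to reach the cloud
    data_must_stay_local: bool   # residency or compliance constraint
    needs_heavy_analytics: bool  # large-scale training, archiving, reporting


def recommend(w: Workload, cloud_round_trip_ms: float = 50.0) -> str:
    """Return 'edge', 'cloud', or 'hybrid' from the three framework questions."""
    needs_edge = (
        w.latency_budget_ms < cloud_round_trip_ms  # real-time processing
        or w.data_rate_mbps > w.uplink_mbps        # bandwidth limits
        or w.data_must_stay_local                  # data residency
    )
    if needs_edge and w.needs_heavy_analytics:
        return "hybrid"
    return "edge" if needs_edge else "cloud"


robot_arm = Workload(10, 5, 100, False, True)
nightly_reports = Workload(500, 5, 100, False, True)
print(recommend(robot_arm))        # hybrid
print(recommend(nightly_reports))  # cloud
```

A workload that needs both a fast local response and large-scale analytics lands on the hybrid answer, which matches the split described above: instant decisions at the edge, analytics and storage in the cloud.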

Make the Right Move for Your Infrastructure

You came here to clearly understand edge computing vs cloud computing — and now you have a practical, side‑by‑side view of how each approach impacts speed, scalability, cost, and control. The confusion around latency, security trade‑offs, and infrastructure complexity can stall real progress. But with the distinctions now clear, you’re in a position to make a smarter, strategy‑driven decision.

The real pain point isn’t choosing one over the other — it’s choosing the wrong architecture for your workload, users, or growth plans. Whether you need real‑time processing at the edge or elastic power in the cloud, aligning your infrastructure with your performance goals is what prevents bottlenecks, downtime, and wasted spend.

Now it’s time to act. Evaluate your application demands, audit your latency and compliance requirements, and implement the model that supports your long‑term scale. If you want proven frameworks, practical tech breakdowns, and step‑by‑step device and AI strategy guidance trusted by forward‑thinking builders, explore our expert resources today and stay ahead of the curve. The right infrastructure decision starts with informed action.

Head of Machine Learning & Systems Architecture

Justin Huntecovil is the kind of writer who genuinely cannot publish something without checking it twice. Maybe three times. They came to digital device trends and strategies through years of hands-on work rather than theory, which means the things they write about (Digital Device Trends and Strategies, Practical Tech Application Hacks, Innovation Alerts, among other areas) are things they have actually tested, questioned, and revised opinions on more than once.

That shows in the work. Justin's pieces tend to go a level deeper than most. Not in a way that becomes unreadable, but in a way that makes you realize you'd been missing something important. They have a habit of finding the detail that everybody else glosses over and making it the center of the story, which sounds simple but takes a rare combination of curiosity and patience to pull off consistently. The writing never feels rushed. It feels like someone who sat with the subject long enough to actually understand it.

Outside of specific topics, what Justin cares about most is whether the reader walks away with something useful. Not impressed. Not entertained. Useful. That's a harder bar to clear than it sounds, and they clear it more often than not, which is why readers tend to remember Justin's articles long after they've forgotten the headline.
