If you’re searching for a clear, practical Docker container deployment guide, you likely want more than theory: you want a step-by-step path you can follow with confidence. Deploying containers can feel straightforward at first, but misconfigured environments, networking issues, and scaling challenges quickly turn simple setups into complex problems.

This article is designed to match that intent exactly. We’ll walk through the essential stages of Docker container deployment, from preparing your environment and building optimized images to configuring orchestration, security, and monitoring for production readiness. Each section focuses on actionable steps and proven best practices, not abstract concepts.

The guidance here is grounded in hands-on experience with modern infrastructure workflows, container security standards, and real-world DevOps pipelines. By the end, you’ll have a structured deployment approach you can rely on—whether you’re launching a single service or managing a scalable containerized application in production.

From Dockerfile to production, most teams obsess over orchestration. I disagree with that focus: the real leverage lives inside the image. A Dockerfile is a build manifest, meaning a repeatable recipe for assembling container images. Yet developers still accept bloated layers and slow CI as “normal.” They’re not. Use multi-stage builds, trim base images, and cache dependencies deliberately. Smaller images ship faster and reduce attack surface, which studies link to fewer vulnerabilities (Docker, 2023). Some argue Kubernetes will fix these inefficiencies; it won’t. Garbage in, garbage out. Adopt the mindset behind any good Docker container deployment guide: optimize early.

| Stage | Focus |
|-------|-------|
| Build | Slim layers |
| Ship | Immutable tags |
| Run | Health checks |

Mastering the Foundation: Building Lean and Secure Docker Images

Shipping bloated containers is like deploying a production app with debug mode on (it works… but should it?). Let’s tighten things up with practical steps you can apply today.

Choose the Right Base Image

Your base image is the starting filesystem your container builds on. Smaller base images reduce both size and attack surface (the total number of exploitable entry points).

Common options:

- Alpine – Extremely small (~5MB), great for simple apps. It uses musl libc instead of glibc, so glibc-linked binaries may require extra setup.

- slim (e.g., -slim-bookworm) – Debian-based, balancing compatibility and size. Prefer a current Debian release; buster is past end of life.

- Distroless – No package manager or shell; minimal runtime-only environment.

Decision framework:

- Need maximum compatibility? Start with slim.

- Comfortable managing dependencies? Use Alpine.

- Production-hardened runtime? Choose distroless.

Pro tip: Scan base images regularly with tools like Trivy to catch known CVEs (Common Vulnerabilities and Exposures).
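If you want the scan to gate a pipeline, one option is to run trivy image --format json against the image and inspect the report. The Python sketch below assumes the report has a top-level Results list whose entries carry an optional Vulnerabilities list, each finding with a Severity field; check that shape against the Trivy version you run. The sample report and CVE IDs are made up for illustration.

```python
import json
from collections import Counter

def severity_counts(report_json: str) -> Counter:
    """Count vulnerabilities per severity in a Trivy JSON report (assumed shape)."""
    report = json.loads(report_json)
    counts: Counter = Counter()
    # One entry per scanned target (OS packages, lockfiles, ...);
    # "Vulnerabilities" may be missing or null when nothing was found.
    for result in report.get("Results") or []:
        for vuln in result.get("Vulnerabilities") or []:
            counts[vuln.get("Severity", "UNKNOWN")] += 1
    return counts

def should_block(report_json: str, blocking: tuple[str, ...] = ("CRITICAL", "HIGH")) -> bool:
    """Return True if any finding has a blocking severity."""
    counts = severity_counts(report_json)
    return any(counts[level] > 0 for level in blocking)

# Hypothetical report with made-up CVE IDs.
sample = json.dumps({"Results": [
    {"Target": "example-image (debian 12)", "Vulnerabilities": [
        {"VulnerabilityID": "CVE-0000-0001", "Severity": "HIGH"},
        {"VulnerabilityID": "CVE-0000-0002", "Severity": "LOW"},
    ]},
    {"Target": "app/package-lock.json", "Vulnerabilities": None},
]})

print(dict(severity_counts(sample)))  # {'HIGH': 1, 'LOW': 1}
print(should_block(sample))           # True
```

Trivy can also enforce a threshold on its own with --severity HIGH,CRITICAL --exit-code 1; parsing the JSON mainly pays off when you want custom reporting or per-team rules.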

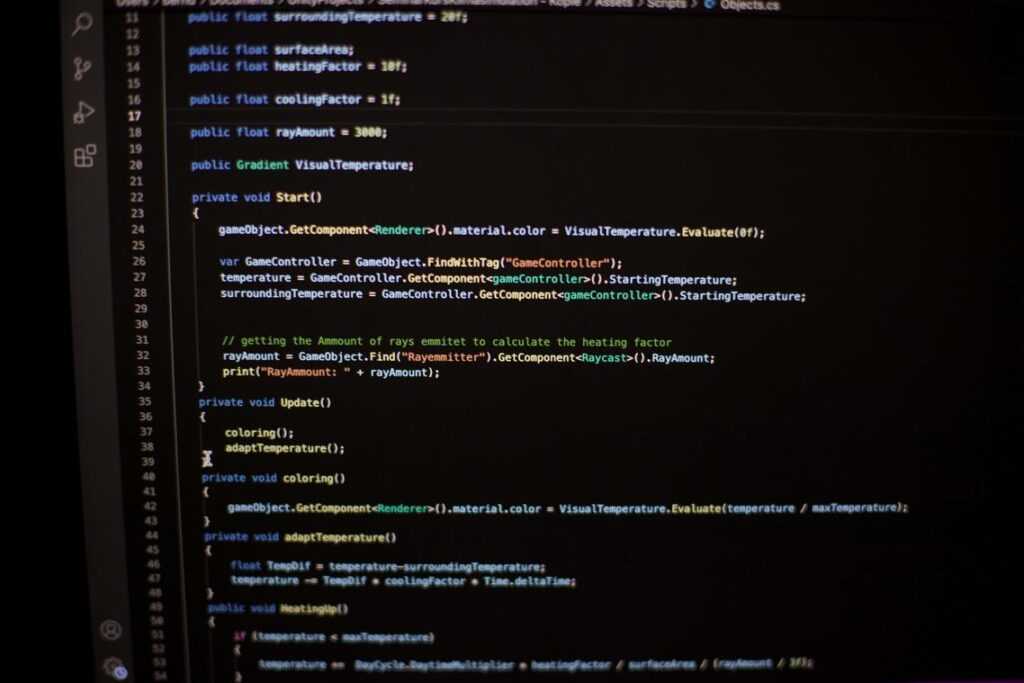

Implement Multi-Stage Builds

Multi-stage builds separate build dependencies from runtime artifacts.

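```dockerfile
# Build stage: full Node.js toolchain for installing dependencies and compiling
FROM node:18 AS builder
WORKDIR /app
# Copy package.json and package-lock.json first; npm ci installs exactly what the lockfile pins
COPY package*.json ./
RUN npm ci
COPY . .
RUN npm run build
# Drop devDependencies so only runtime packages reach the final stage
RUN npm prune --omit=dev

# Runtime stage: Node.js runtime only, with no shell or npm
FROM gcr.io/distroless/nodejs18-debian12
WORKDIR /app
COPY --from=builder /app/package.json ./
COPY --from=builder /app/node_modules ./node_modules
COPY --from=builder /app/dist ./dist
# The distroless entrypoint is already node, so CMD only names the script
CMD ["dist/server.js"]
```

The final image contains only the compiled output and its production dependencies: no npm, no build toolchain, no source files.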

Optimize Layering and Caching

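Docker caches each build step as a layer and reuses it only if that step and every step before it are unchanged; once one layer changes, everything after it is rebuilt. Place rarely changing files first: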

- COPY package.json and the lockfile before source code

- RUN dependency installation early

- COPY app source last

This minimizes rebuild time when code changes.
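To make the ordering rule concrete, here is a toy Python model of it: each step’s cache key is a hash of its parent’s key, its instruction, and (for COPY) a stand-in digest of the copied files, so one change invalidates every later step. It is a simplification for illustration, not how BuildKit actually computes cache keys; the step lists and digest strings are hypothetical.

```python
from hashlib import sha256

# A step is (instruction, content_digest). content_digest stands in for the
# checksum of files a COPY brings in; RUN steps use "" because in this model
# only the command text and the parent layer determine their key.
Step = tuple[str, str]

def cache_keys(steps: list[Step]) -> list[str]:
    """Chain each step's key to its parent's, so a change cascades downward."""
    keys, parent = [], ""
    for instruction, content in steps:
        parent = sha256(f"{parent}|{instruction}|{content}".encode()).hexdigest()
        keys.append(parent)
    return keys

def rebuilt_steps(before: list[Step], after: list[Step]) -> list[int]:
    """Indices of steps whose key changed, i.e. cache misses on the next build."""
    pairs = zip(cache_keys(before), cache_keys(after))
    return [i for i, (old, new) in enumerate(pairs) if old != new]

def deps_first(src: str) -> list[Step]:
    return [("COPY package*.json ./", "lock-v1"), ("RUN npm ci", ""),
            ("COPY . .", src), ("RUN npm run build", "")]

def source_first(src: str) -> list[Step]:
    # COPY . . also brings in package*.json, so any source edit changes it.
    return [("COPY . .", src), ("RUN npm ci", ""), ("RUN npm run build", "")]

print(rebuilt_steps(deps_first("src-v1"), deps_first("src-v2")))      # [2, 3]
print(rebuilt_steps(source_first("src-v1"), source_first("src-v2")))  # [0, 1, 2]
```

With dependencies copied first, a source edit reruns only the final COPY and the build; copying the whole tree first forces npm ci to run again on every change.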

Leverage the .dockerignore File

A .dockerignore file excludes unnecessary files from the build context.

Typical entries:

- node_modules

- .git

- *.env

- test directories

This shrinks the build context, speeds up builds, and keeps files such as local .env secrets out of your images. If you’re following a Docker container deployment guide, make .dockerignore part of your checklist.
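.dockerignore patterns are matched relative to the root of the build context, which is easy to trip over: *.env excludes a top-level .env file but not config/prod.env. The Python sketch below approximates a subset of the rules (*, ?, a double-asterisk wildcard for any number of directories, and ! exceptions, with the last matching line winning) to show which paths would be excluded. It is an illustration, not Docker’s matcher, and it ignores character classes and other edge cases.

```python
import re

def _pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a subset of .dockerignore syntax (*, ?, **) into a regex."""
    out, i = [], 0
    while i < len(pattern):
        if pattern.startswith("**/", i):
            out.append("(?:.*/)?")   # zero or more leading directories
            i += 3
        elif pattern.startswith("**", i):
            out.append(".*")
            i += 2
        elif pattern[i] == "*":
            out.append("[^/]*")      # a single * never crosses a directory boundary
            i += 1
        elif pattern[i] == "?":
            out.append("[^/]")
            i += 1
        else:
            out.append(re.escape(pattern[i]))
            i += 1
    # Matching a directory also excludes everything beneath it.
    return re.compile("".join(out) + "(?:/.*)?")

def is_ignored(path: str, patterns: list[str]) -> bool:
    """The last matching pattern wins; a leading ! re-includes a path."""
    ignored = False
    for raw in patterns:
        negate = raw.startswith("!")
        pattern = raw[1:] if negate else raw
        if _pattern_to_regex(pattern.strip("/")).fullmatch(path):
            ignored = not negate
    return ignored

patterns = ["node_modules", ".git", "*.env", "test"]
print(is_ignored("node_modules/lodash/index.js", patterns))    # True
print(is_ignored(".env", patterns))                            # True
print(is_ignored("config/prod.env", patterns))                 # False: root-only pattern
print(is_ignored("config/prod.env", patterns + ["**/*.env"]))  # True
```

Prefixing a pattern with two asterisks and a slash, as in the last example, extends it to every directory.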

Lean images deploy faster, scale cleaner, and fail less dramatically (which is the goal).

Accelerating the Pipeline: Strategies for Faster Builds and Pushes

Speed in CI/CD isn’t just about convenience—it’s about tightening the feedback loop so developers can fix issues before context disappears (and before coffee gets cold). So, how do you actually accelerate the pipeline?

Parallelize Your CI/CD Workflow

First, run independent jobs in parallel. In GitHub Actions or GitLab CI, jobs that don’t depend on each other can execute simultaneously instead of sequentially. Unit tests, linting, and security scans, for example, don’t need to wait on each other. Total pipeline time then drops from the sum of all job durations to roughly the longest chain of dependent jobs, which matters most in larger repositories.

If you’re setting up from scratch, pair this with an introductory DevOps CI/CD pipeline setup tutorial so your stages are structured properly from day one.
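The payoff from parallelism is easy to estimate: with enough runners, a pipeline takes as long as its longest chain of dependent jobs rather than the sum of all jobs. The Python sketch below computes that critical path. The job names and durations are hypothetical, and needs mirrors the keyword GitHub Actions and GitLab CI use to declare job dependencies. It assumes unlimited runners, no queueing, and no dependency cycles.

```python
def wall_clock(durations: dict[str, int], needs: dict[str, list[str]]) -> int:
    """Pipeline duration if each job starts as soon as the jobs it needs finish.

    Assumes unlimited runners, no queueing, and an acyclic dependency graph.
    """
    finish: dict[str, int] = {}

    def finish_time(job: str) -> int:
        if job not in finish:
            # A job can start once its slowest prerequisite has finished.
            start = max((finish_time(dep) for dep in needs.get(job, [])), default=0)
            finish[job] = start + durations[job]
        return finish[job]

    return max(finish_time(job) for job in durations)

# Hypothetical job durations in minutes.
durations = {"lint": 2, "unit_tests": 6, "security_scan": 4, "build_image": 5, "deploy": 1}
needs = {"build_image": ["lint", "unit_tests", "security_scan"], "deploy": ["build_image"]}

print(sum(durations.values()))       # 18: every job run one after another
print(wall_clock(durations, needs))  # 12: 6 (slowest check) + 5 + 1
```

The three checks overlap, so only the slowest one (unit tests) sits on the critical path.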

Use a Remote Build Cache

Next, consider a remote build cache. A build cache stores intermediate image layers so they can be reused instead of rebuilt. CI runners are often ephemeral and start with an empty local cache, so sharing layers through a registry-based cache (supported by Docker BuildKit) lets every runner and teammate reuse earlier work. The result? Faster builds across environments.

Pro tip: structure your Dockerfile so rarely changed steps (like dependency installs) appear early—this maximizes cache hits.
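With BuildKit, a registry-backed cache is configured through the --cache-from and --cache-to flags of docker buildx build. The Python helper below only assembles that command line for a CI step; the image and cache references are hypothetical. The mode=max option asks BuildKit to export layers from intermediate stages too, which is what lets multi-stage builds benefit from the shared cache.

```python
import shlex

def buildx_command(image: str, cache_ref: str, context: str = ".") -> list[str]:
    """Assemble a docker buildx build that reads and writes a registry cache."""
    return [
        "docker", "buildx", "build",
        # Reuse layers that any earlier runner exported to the cache reference.
        "--cache-from", f"type=registry,ref={cache_ref}",
        # Export layers back; mode=max includes intermediate build stages.
        "--cache-to", f"type=registry,ref={cache_ref},mode=max",
        "--tag", image,
        "--push",  # push the finished image to its registry
        context,
    ]

# Hypothetical registry paths: one immutable image tag, one shared cache tag.
cmd = buildx_command("registry.example.com/shop/api:1.4.2",
                     "registry.example.com/shop/api:buildcache")
print(shlex.join(cmd))
```

In a CI script you could run the list with subprocess.run(cmd, check=True). Note that buildx’s default docker driver typically can’t export cache to a registry, so create a builder first, for example with docker buildx create --use --driver docker-container.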

Optimize Registry Pushes

Then, optimize your image pushes. Docker uploads only the layers the registry doesn’t already have, so a typical code change pushes just the small top layers, and slimmer images also pull faster onto fresh nodes. Smaller base images (like Alpine) and multi-stage builds reduce size and bandwidth usage. This becomes critical in distributed teams or hybrid cloud setups.
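Because layers are content-addressed, a push is essentially a set difference: the client checks each layer digest against the registry and uploads only the missing blobs. This Python sketch models that step with placeholder digests and sizes; real digests are sha256 hashes of the layer content.

```python
def push_plan(local_layers: list[tuple[str, int]], remote: set[str]) -> tuple[list[str], int]:
    """Return the layer digests a push would upload and their total size in bytes."""
    missing = [(digest, size) for digest, size in local_layers if digest not in remote]
    return [digest for digest, _ in missing], sum(size for _, size in missing)

# Placeholder digests and sizes (bytes) for illustration only.
local = [("sha256:base", 30_000_000), ("sha256:deps", 45_000_000), ("sha256:app-v2", 2_000_000)]
remote = {"sha256:base", "sha256:deps", "sha256:app-v1"}

print(push_plan(local, remote))  # (['sha256:app-v2'], 2000000)
```

Here only the 2 MB application layer travels; the 75 MB of base and dependency layers are already in the registry.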

Consider BuildKit

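Finally, make sure BuildKit is enabled. It has been the default builder since Docker Engine 23.0; on older engines, set DOCKER_BUILDKIT=1. BuildKit builds independent stages in parallel, skips stages the final image doesn’t need, and caches more intelligently, so many teams see shorter builds without other changes.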

What’s next? Once builds and pushes are fast, focus on deployment automation; your Docker container deployment guide should evolve alongside your pipeline optimizations.

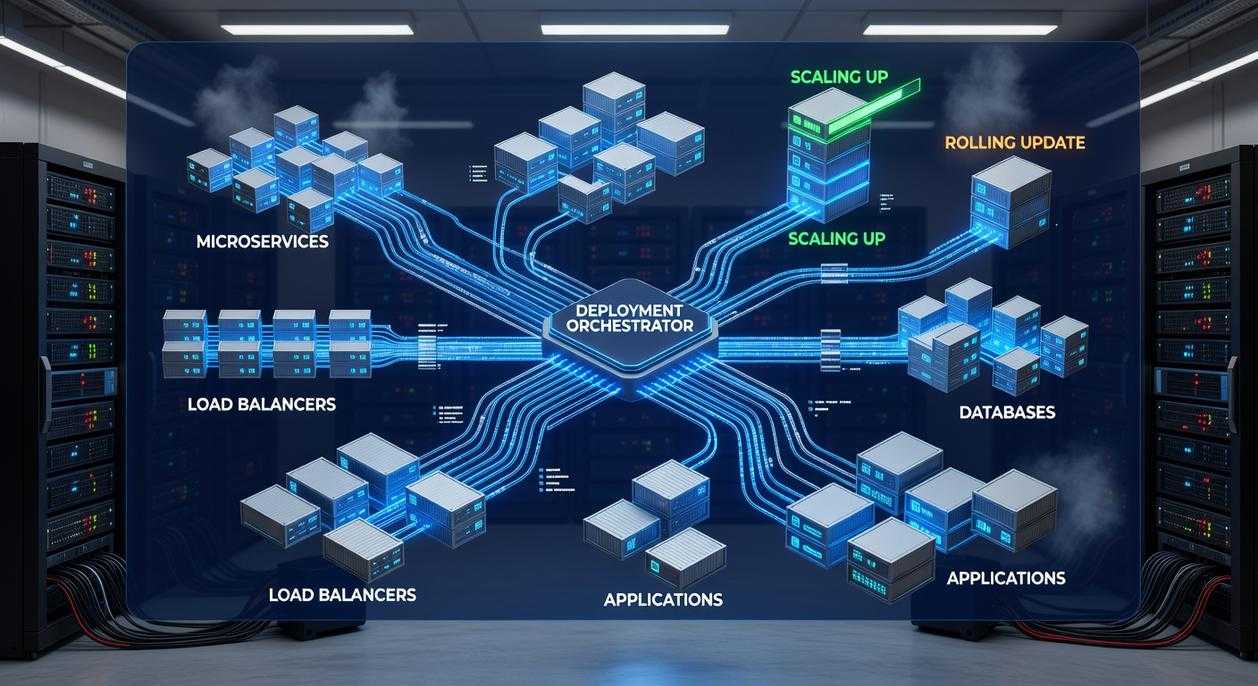

Intelligent Deployment: From a Single Host to Scalable Clusters

Choosing Your Deployment Target

Start simple. If you’re building locally or running a small internal tool, use Docker Compose. It’s lightweight, readable, and perfect for defining multi-container apps without operational overhead. Think of it as training wheels that still get you moving fast.

But for production? I strongly recommend Kubernetes or Amazon ECS. Once you need auto-scaling, self-healing, or multi-host networking, Compose becomes limiting. Some argue orchestration is overkill for small teams—and they’re not wrong. Complexity is real. Still, if uptime and growth matter, an orchestrator pays off quickly.

If you’re unsure, follow a practical Docker container deployment guide and map your needs against your scaling expectations before committing.

Implement Zero-Downtime Deployments

Downtime erodes trust (and revenue). Use rolling updates, where containers are replaced gradually, or blue-green deployments, where a new version runs alongside the old before switching traffic.

Orchestrators automate this. Kubernetes, for example, shifts traffic only when new pods pass readiness checks. My recommendation: default to rolling updates; reserve blue-green for major releases where rollback speed is critical.
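Kubernetes Deployments tune rolling updates with two fields under spec.strategy.rollingUpdate: maxSurge (how many extra pods may exist during the rollout) and maxUnavailable (how far ready capacity may dip below the desired count). Both default to 25%. The Python simulation below is a simplified model of how those two settings bound a rollout, not the Deployment controller’s actual algorithm; it uses absolute counts and assumes each new pod passes its readiness check one step after starting.

```python
def rolling_update(replicas: int, max_surge: int, max_unavailable: int) -> list[tuple[int, int]]:
    """Simulate a simplified rolling update; return (old_ready, new_ready) per step.

    Simplifications: absolute counts (not percentages), each new pod becomes
    ready exactly one step after it starts, and old pods stay ready until removed.
    """
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new_ready, starting = replicas, 0, 0
    history = [(old, new_ready)]
    while old > 0 or new_ready < replicas:
        # Pods started last step pass readiness and begin receiving traffic.
        new_ready, starting = new_ready + starting, 0
        # Retire old pods only while ready pods stay >= replicas - max_unavailable.
        old -= min(old, max(0, old + new_ready - (replicas - max_unavailable)))
        # Start new pods without exceeding replicas + max_surge pods in total.
        room = replicas + max_surge - (old + new_ready)
        starting = max(0, min(room, replicas - new_ready))
        history.append((old, new_ready))
    return history

steps = rolling_update(replicas=4, max_surge=1, max_unavailable=0)
print(steps)                         # [(4, 0), (4, 0), (3, 1), (2, 2), (1, 3), (0, 4)]
print(min(o + n for o, n in steps))  # 4: ready capacity never drops
```

Setting maxUnavailable to 0, as here, trades one extra pod during the rollout for never dropping below full capacity; rolling_update(4, 0, 1) frees capacity first instead and dips to three ready pods.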

Master Resource Management

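Always define CPU and memory requests (the amount the scheduler reserves for a container) and limits (hard caps on usage). A container that exceeds its CPU limit is throttled; one that exceeds its memory limit is OOM-killed. Without limits, one noisy container can monopolize the host. Yes, some developers skip this during testing, but in production, that’s gambling.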

Set conservative limits first. Monitor. Adjust upward only when metrics justify it.
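A lightweight way to enforce this is a pre-deploy check over your manifests. The Python sketch below walks a pod spec, given as a dict shaped like the containers field of a Kubernetes PodSpec, and reports any container missing a CPU or memory request or limit. The manifest contents are hypothetical; Kubernetes itself does not require these fields, though a LimitRange can supply per-namespace defaults.

```python
def missing_resources(pod_spec: dict) -> list[str]:
    """List containers that lack CPU/memory requests or limits."""
    problems = []
    for container in pod_spec.get("containers", []):
        resources = container.get("resources") or {}
        for section in ("requests", "limits"):
            declared = resources.get(section) or {}
            for resource in ("cpu", "memory"):
                if resource not in declared:
                    problems.append(f"{container['name']}: missing {section}.{resource}")
    return problems

# Hypothetical pod spec: the sidecar declares requests but no limits.
pod_spec = {
    "containers": [
        {"name": "api", "resources": {
            "requests": {"cpu": "250m", "memory": "256Mi"},
            "limits": {"cpu": "500m", "memory": "512Mi"}}},
        {"name": "log-shipper", "resources": {
            "requests": {"cpu": "50m", "memory": "64Mi"}}},
    ]
}

print(missing_resources(pod_spec))
# ['log-shipper: missing limits.cpu', 'log-shipper: missing limits.memory']
```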

Health Checks Are Non-Negotiable

Configure liveness probes (restart containers that stop responding) and readiness probes (keep a container out of load balancing until it can serve traffic). Together they create a self-healing system, one that detects failures and recovers from many of them automatically.

If you do only one thing from this guide, do this.
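Probes need endpoints to call. The Python sketch below, using only the standard library, serves a liveness endpoint that answers whenever the process is responsive and a readiness endpoint that returns 503 until startup code sets a flag. The /healthz and /readyz paths, port 8080, and the READY flag are assumptions for illustration; match them to your own app.

```python
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical flag: application startup code sets it once dependencies
# (database, cache, ...) are reachable; until then readiness reports 503.
READY = threading.Event()

class ProbeHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path == "/healthz":
            status = 200  # liveness: the process is alive and can answer
        elif self.path == "/readyz":
            status = 200 if READY.is_set() else 503  # readiness: safe to route traffic
        else:
            status = 404
        self.send_response(status)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, format: str, *args) -> None:
        pass  # silence per-request logging; probes fire every few seconds

def start_probe_server(host: str = "0.0.0.0", port: int = 8080) -> ThreadingHTTPServer:
    """Serve the probe endpoints on a background thread and return the server."""
    server = ThreadingHTTPServer((host, port), ProbeHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

In the pod spec, point livenessProbe and readinessProbe (each with an httpGet path and port) at these endpoints. Keep the liveness check free of dependency checks: if it fails whenever a shared database is down, the orchestrator restarts every replica without fixing anything.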

Putting Efficiency into Practice: Your Action Plan

Efficient Docker deployment isn’t a one-time tweak—it’s a habit. Think of it like going to the gym: one salad won’t help, but consistent reps will. In container terms, that means continually refining images, tightening builds, and tuning runtime configs.

The pain is real. Bloated images, sluggish CI/CD pipelines, and loose security settings act like a silent subscription fee you never agreed to (and somehow can’t cancel). They drain velocity and inflate infrastructure costs.

The fix? Lean images, faster pipelines, smarter orchestration. Follow a proven Docker container deployment guide and focus on practical wins.

| Area | Inefficient State | Optimized State |
|------|-------------------|-----------------|
| Images | 1GB+ base layers | Minimal, slim builds |
| Builds | 10+ minute waits | Parallel, cached stages |
| Runtime | Overprovisioned | Right-sized resources |

Here’s your challenge: convert one core service to a multi-stage build this week. Measure the image size and build-time drop. Small tweak, big win (cue dramatic before-and-after montage).

Master Your Next Deployment with Confidence

You came here looking for clarity on containerization and a practical path forward. Now you understand how to structure, configure, and optimize your environment with a Docker container deployment guide that removes guesswork and reduces costly mistakes.

Deployment issues, scaling failures, and inconsistent environments can stall innovation and waste valuable time. With the right framework in place, those pain points turn into streamlined releases, predictable performance, and faster iteration cycles.

The next step is simple: put this into action. Audit your current setup, implement the deployment best practices outlined above, and standardize your workflow so every release is smooth and repeatable.

If you’re ready to eliminate deployment friction and build with confidence, start applying this Docker container deployment guide today. Proven, structured deployment strategies are how teams ship faster and smarter; now it’s your turn. Take control of your container strategy and deploy with precision.

Head of Machine Learning & Systems Architecture

Justin Huntecovil is the kind of writer who genuinely cannot publish something without checking it twice. Maybe three times. They came to digital device trends and strategies through years of hands-on work rather than theory, which means the things they write about (Digital Device Trends and Strategies, Practical Tech Application Hacks, Innovation Alerts, among other areas) are things they have actually tested, questioned, and revised opinions on more than once.

That shows in the work. Justin's pieces tend to go a level deeper than most. Not in a way that becomes unreadable, but in a way that makes you realize you'd been missing something important. They have a habit of finding the detail that everybody else glosses over and making it the center of the story, which sounds simple but takes a rare combination of curiosity and patience to pull off consistently. The writing never feels rushed. It feels like someone who sat with the subject long enough to actually understand it.

Outside of specific topics, what Justin cares about most is whether the reader walks away with something useful. Not impressed. Not entertained. Useful. That's a harder bar to clear than it sounds, and they clear it more often than not, which is why readers tend to remember Justin's articles long after they've forgotten the headline.
