If you’re searching for clear, actionable insight into where AI and robotics are headed next, you’re likely overwhelmed by headlines, hype cycles, and fragmented updates. This article is built to cut through that noise by focusing specifically on innovation signals in AI and robotics: the early indicators, technical breakthroughs, funding shifts, and product deployments that reveal where real momentum is building.

Instead of repeating surface-level trends, we analyze core machine learning frameworks, emerging hardware capabilities, and practical deployment strategies shaping digital devices and intelligent systems. Our insights are grounded in continuous monitoring of research releases, developer ecosystems, patent activity, and enterprise adoption patterns.

In the sections ahead, you’ll discover which signals point to scalable opportunity, which advances are still experimental, and how to interpret these developments strategically—whether you’re building, investing, or integrating AI-driven solutions. The goal is simple: give you clarity, context, and confidence in navigating the rapidly evolving world of AI and robotics.

Beyond the Screen: Where AI Meets the Physical World

AI is no longer confined to chatbots and image generators. It now drives autonomous robots, smart factories, and surgical assistants operating with millimeter precision.

What’s powering this shift?

- Vision-language-action models that translate camera input into real-world movement

- Reinforcement learning systems trained in simulation, then deployed on hardware

- Edge AI chips enabling real-time decisions without cloud latency

Skeptics call it overhyped. Yet deployments such as Boston Dynamics’ warehouse robots and AI-guided farming drones suggest the ROI can be measured. These are practical innovation signals in AI and robotics: data-backed, field-tested, and scaling across logistics, healthcare, and agriculture.

Generative AI for the Real World: The Rise of Physical Models

Generative Physical Models (GPMs) are AI systems designed to understand and simulate the real, physical world. Text-based large language models (LLMs), such as those behind ChatGPT, predict the next token in a sequence of text. GPMs instead predict what happens next in space and time. They reason about gravity, force, motion, and object interaction. In simple terms, if an LLM writes an instruction manual, a GPM understands how to actually perform the task.

This shift matters because real-world environments are messy. Objects bend, collide, and behave unpredictably (anyone who has tried folding a fitted sheet knows this). GPMs learn these dynamics directly from data like:

- Video recordings of human tasks

- Sensor readings from robots

- 3D scans and spatial maps

Under the hood, familiar architectures power this leap. Transformers, originally built for language, now model sequences of video frames. Diffusion models, known for generating images, simulate physical state changes step by step. By training on large multimodal datasets, these systems learn how actions tend to lead to outcomes, not just which frames tend to appear together.
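To make "predicting what happens next" concrete, here is a deliberately tiny sketch. It is not how a production GPM works, since real systems train transformers or diffusion models on video and sensor streams. Everything named here (the `State` type, the `simulate_drop` data generator, the fitted per-step velocity change) is an illustrative assumption. The point is only the shape of the idea: learn a transition rule from observed states, then use it to forecast the next one.

```python
from dataclasses import dataclass


@dataclass
class State:
    height: float    # metres
    velocity: float  # metres per second, positive is up


def simulate_drop(h0, steps, dt=0.01, g=9.81):
    """Generate 'recorded' states for a falling object (stand-in for sensor data)."""
    states = [State(h0, 0.0)]
    for _ in range(steps):
        last = states[-1]
        velocity = last.velocity - g * dt
        states.append(State(last.height + velocity * dt, velocity))
    return states


def fit_velocity_delta(states):
    """Learn the average per-step velocity change from observed transitions."""
    deltas = [b.velocity - a.velocity for a, b in zip(states, states[1:])]
    return sum(deltas) / len(deltas)


def predict_next(state, velocity_delta, dt=0.01):
    """Forecast the next state using the learned transition rule."""
    velocity = state.velocity + velocity_delta
    return State(state.height + velocity * dt, velocity)


recorded = simulate_drop(h0=2.0, steps=50)
learned_delta = fit_velocity_delta(recorded)
forecast = predict_next(recorded[-1], learned_delta)
```

The model never sees the value of gravity. It recovers the effect of gravity from the recorded transitions, which is the same principle a GPM applies at far larger scale and with far richer inputs.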

A major breakthrough is “video-to-action.” Here, AI watches a task—like folding a shirt—and converts visual input into robotic control commands without manual programming. Instead of engineers coding every joint movement, the model infers trajectories, grip force, and timing.

In manufacturing, this can cut robot training time from weeks to hours. Companies can prototype faster, retrain systems for new products, and adapt to change with less downtime. For teams tracking innovation signals in AI and robotics, this is a clear inflection point.

Pro tip: Start with narrow, repeatable tasks when piloting GPM-based robotics. Controlled environments accelerate learning and reduce costly deployment errors.

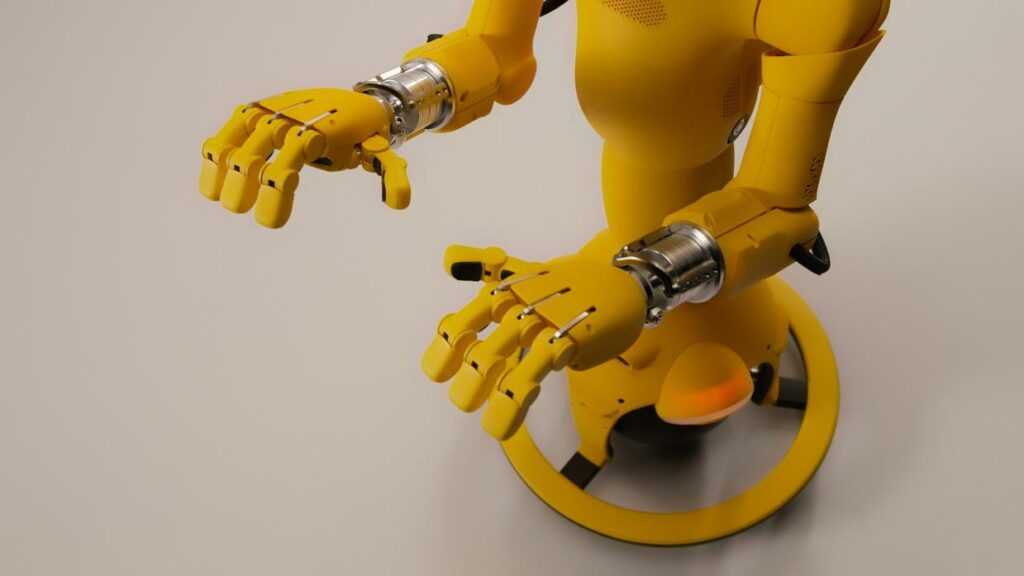

The New Workforce: How Embodied AI is Powering Humanoid Robots

For decades, robots meant one thing: repetition. Think of a traditional industrial arm spot-welding car doors on an assembly line. It performs a single programmed task, in a controlled environment, with near-perfect consistency. That’s automation—machines executing predefined instructions.

Now, however, we’re stepping into autonomy. Humanoid robots aren’t just tools; they’re adaptive systems designed to operate in messy, human-centered spaces. And in my view, that shift is far more significant than most headlines suggest (yes, even more than the latest flashy robot demo).

At the core is Embodied AI—a design philosophy where artificial intelligence is deeply integrated with a robot’s physical form. Instead of acting as a remote “brain” issuing commands, the AI continuously learns from sensors (cameras, lidar, tactile skin) and actuators (motors and joints that create movement). In simple terms, actuators are components that convert energy into motion. Because the system learns through physical interaction, it adapts to new environments—like navigating a cluttered warehouse aisle it has never seen before.

Critics argue humanoid robots are overengineered and impractical compared to specialized machines. Fair point. Wheeled robots are cheaper and often more efficient. But here’s my take: our world is built for humans—stairs, shelves, tools—so human-shaped robots reduce the need to redesign infrastructure.

Recent hardware breakthroughs make this viable. Improved battery density extends operating hours (lithium-ion advancements cited by the IEA), while enhanced tactile sensing allows delicate object handling. Meanwhile, more efficient electric actuators improve torque-to-weight ratios.

In logistics, humanoids assist with picking and palletizing. In healthcare, they support patient mobility and supply delivery. These deployments align closely with the innovation signals in AI and robotics, and they echo broader forecasts about which industries are most likely to see rapid transformation.

In short, we’re not just building smarter robots—we’re building a new workforce.

The Synergy Effect: When Advanced AI and Robotics Converge

When advanced AI meets robotics, something bigger than automation happens. We get a world model—a dynamic, predictive simulation of reality inside a machine’s “mind.” A world model is an AI system trained to anticipate outcomes before actions occur. Instead of reacting to commands, robots can forecast consequences (think less remote control, more chess grandmaster planning five moves ahead).

Some critics argue robots don’t need this complexity. Pre-programmed rules, they say, are cheaper and safer. That works—until the environment changes. A rigid system breaks when reality deviates from the script. A world model adapts.
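The difference between following a script and forecasting consequences can be sketched in a few lines. This is a toy under stated assumptions: the grid, the `ACTIONS` list, and the `predict` function are hypothetical stand-ins, and a real world model would be a learned network rather than simple addition. The planner imagines every short action sequence, discards the ones that collide, and commits only to the first step of the best one.

```python
from itertools import product

# Hypothetical action set: right, up, down, left, wait.
ACTIONS = [(1, 0), (0, 1), (0, -1), (-1, 0), (0, 0)]


def predict(position, action):
    """Stand-in world model: forecast the next grid cell for an action."""
    return (position[0] + action[0], position[1] + action[1])


def plan(position, goal, blocked, horizon=3):
    """Return the first action of the best imagined action sequence."""
    best_action, best_distance = (0, 0), float("inf")
    for sequence in product(ACTIONS, repeat=horizon):
        imagined = position
        collided = False
        for action in sequence:
            imagined = predict(imagined, action)
            if imagined in blocked:
                collided = True
                break
        if collided:
            continue  # never execute a plan the model says will fail
        distance = abs(goal[0] - imagined[0]) + abs(goal[1] - imagined[1])
        if distance < best_distance:
            best_action, best_distance = sequence[0], distance
    return best_action


clear_path = plan((0, 0), (3, 0), blocked=set())
detour = plan((0, 0), (3, 0), blocked={(1, 0)})
```

With a clear path the planner heads straight for the goal. When a cell in the way is blocked, it steps around the obstacle without any rule having been written for that case, because the failed outcomes were ruled out in imagination first.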

Consider a smart factory. A fleet of robots shares a centralized predictive model of equipment status, inventory levels, and workflow timing. If a conveyor fails, robots reroute tasks automatically. If a supply shortage appears, assembly sequences adjust in real time. This isn’t basic automation; it’s coordinated anticipation. According to McKinsey & Company, predictive maintenance alone can reduce machine downtime by up to 50%.
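As a rough illustration of the rerouting idea, the sketch below assumes a shared status table that every robot can read. The task names, conveyor names, and the `route_tasks` function are all hypothetical, and a real factory would predict failures rather than just record them. Each task lists its acceptable routes in order of preference and takes the first one that is still operational.

```python
def route_tasks(tasks, status):
    """Assign each task the first candidate route that is operational.

    tasks:  task name -> ordered list of candidate routes
    status: route name -> True if operational (the shared fleet-wide view)
    Returns task name -> chosen route, or None if nothing is available.
    """
    routes = {}
    for name, candidates in tasks.items():
        routes[name] = next(
            (route for route in candidates if status.get(route, False)),
            None,
        )
    return routes


tasks = {
    "pallet_a": ["conveyor_1", "conveyor_2"],
    "pallet_b": ["conveyor_2"],
}
status = {"conveyor_1": True, "conveyor_2": True}

before = route_tasks(tasks, status)
status["conveyor_1"] = False  # a conveyor fails
after = route_tasks(tasks, status)
```

Because the status table is shared, one update changes the plan for every robot at once. No individual machine has to discover the failure for itself.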

Meanwhile, this convergence extends into consumer devices. Next-generation home assistants could physically reorganize spaces based on learned routines. Autonomous drones may inspect infrastructure, predict structural weaknesses, and adjust flight paths mid-mission. These are early innovation signals in AI and robotics.

Here’s the overlooked advantage: the feedback loop. Every robotic action generates fresh environmental data, refining the shared model. Performance compounds over time as each robot benefits from what the others have seen. Competitors often focus on hardware upgrades, but intelligence scaling is the real moat.
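A minimal way to picture that feedback loop is a shared estimate that every robot in the fleet updates. The `SharedEstimate` class and the travel-time numbers below are assumptions for illustration, and a running average stands in for what would really be model retraining. Each new observation nudges the estimate, so later robots start from what earlier ones learned.

```python
class SharedEstimate:
    """Fleet-wide running average, updated one observation at a time."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, observation):
        """Fold a new observation into the shared estimate."""
        self.count += 1
        self.mean += (observation - self.mean) / self.count
        return self.mean


# Hypothetical aisle travel times (seconds) reported by three robots.
aisle_travel_time = SharedEstimate()
for reported in [10.0, 12.0, 14.0]:
    aisle_travel_time.update(reported)
```

The incremental update means no robot needs the full history. It only needs the current shared value and its own latest measurement.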

Pro tip: The companies that unify shared predictive models across fleets—not isolated bots—will dominate the next wave.

Navigating the Next Wave of Intelligent Machines

We’ve moved from programming robots to teaching them. The convergence of generative physical AI and advanced robotic hardware marks a fundamental shift in automation. That’s not hype; it’s a redefinition of how machines learn. So what’s changed?

Previously, engineers hard-coded rules for every scenario (think factory arms repeating the same weld forever). Now, embodied AI systems learn through simulation and real-world feedback, enabling generalization—meaning the ability to apply knowledge to new tasks. Critics argue that robots still fail in messy environments, and they’re right—sometimes. However, advances in large-scale training data and simulation realism are closing that gap fast.

This is why innovation signals in AI and robotics matter now. Consider:

- Affordable dexterous grippers.

- Foundation models for motion planning.

- Open-source simulators like NVIDIA Isaac Sim and Gazebo.

Explore them, follow leading labs, and experiment; otherwise you’ll be reacting later.

Stay Ahead of the Curve in AI and Robotics

You came here to better understand the forces shaping modern technology, and now you have a clearer view of the trends, tools, and innovation signals in AI and robotics driving real change.

The pace of disruption isn’t slowing down. If anything, the gap between those who track emerging breakthroughs and those who don’t is widening. Missing early signals means missed opportunities, wasted resources, and falling behind competitors who are already adapting.

That’s why taking action now matters. Continue monitoring key innovation signals in AI and robotics, apply the frameworks you’ve explored, and integrate forward-looking strategies into your digital roadmap.

If staying ahead of rapid tech shifts feels overwhelming, you don’t have to navigate it alone. Join thousands of forward-thinking professionals who rely on our expert insights, practical tutorials, and real-time innovation alerts to stay competitive. Explore the latest updates now and position yourself at the forefront of what’s next.

Founder & Chief Executive Officer (CEO)

Velrona Durnhanna writes the kind of Llusyep machine learning frameworks content that people actually send to each other. Not because it's flashy or controversial, but because it's the sort of thing where you read it and immediately think of three people who need to see it. Velrona has a talent for identifying the questions that a lot of people have but haven't quite figured out how to articulate yet, and then answering them properly.

They cover a lot of ground: Llusyep Machine Learning Frameworks, Innovation Alerts, Core Tech Concepts and Breakdowns, and plenty of adjacent territory that doesn't always get treated with the same seriousness. The consistency across all of it is a certain kind of respect for the reader. Velrona doesn't assume people are stupid, and they don't assume readers know everything either. They write for someone who is genuinely trying to figure something out, because that's usually who's actually reading. That assumption shapes everything from how they structure an explanation to how much background they include before getting to the point.

Beyond the practical stuff, there's something in Velrona's writing that reflects a real investment in the subject: not performed enthusiasm, but the kind of sustained interest that produces insight over time. They have been paying attention to Llusyep machine learning frameworks long enough that they notice things a more casual observer would miss. That depth shows up in the work in ways that are hard to fake.
